PhaseCam3D

Passive Single-View Depth Sensing

3D imaging is critical for a myriad of applications such as autonomous driving, robotics, virtual reality, and surveillance. The current state of the art relies on active-illumination techniques such as LIDAR, radar, structured illumination, or continuous-wave time-of-flight. However, many emerging applications, especially on mobile platforms, are severely power- and energy-constrained. Active approaches are unlikely to scale well for these applications; hence, there is a pressing need for robust passive 3D imaging technologies.

Multi-camera systems provide state-of-the-art performance for passive 3D imaging. In these systems, triangulating corresponding points across multiple views of the scene yields 3D estimates. Stereo and multi-view stereo approaches meet some of the needs mentioned above, and a growing number of mobile platforms have adopted this technology. Unfortunately, placing multiple cameras on a single platform increases both system cost and implementation complexity.
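For a rectified two-camera rig, triangulation reduces to the textbook relation Z = f·B/d, where f is the focal length in pixels, B is the baseline between the cameras, and d is the disparity of a matched point. The sketch below is a generic pinhole-model illustration, not part of PhaseCam3D; the function name and calibration numbers are hypothetical. It also makes the hardware cost concrete: depth comes from the baseline B, which requires a second camera.

```python
def triangulate_rectified(x_left_px, x_right_px, y_px, focal_px, baseline_m, cx, cy):
    """Recover a 3D point (X, Y, Z) in metres, in the left camera's frame,
    from one correspondence in a rectified stereo pair (pinhole model)."""
    disparity = x_left_px - x_right_px  # horizontal shift of the point between views, in pixels
    if disparity <= 0:
        raise ValueError("disparity must be positive for a point in front of both cameras")
    z = focal_px * baseline_m / disparity  # depth is inversely proportional to disparity
    x = (x_left_px - cx) * z / focal_px    # back-project through the left camera
    y = (y_px - cy) * z / focal_px
    return x, y, z


# Hypothetical calibration: 700 px focal length, 12 cm baseline, principal point (320, 240).
point = triangulate_rectified(362.0, 320.0, 240.0, focal_px=700.0, baseline_m=0.12, cx=320.0, cy=240.0)
print(point)  # roughly (0.12, 0.0, 2.0)
```

Because Z is proportional to 1/d, a one-pixel matching error costs far more depth accuracy for distant points (small d) than for near ones.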

3D Imaging

PSF Engineering

End-to-end Design

PhaseCam3D

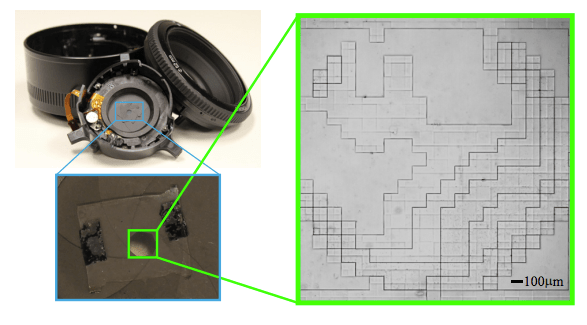

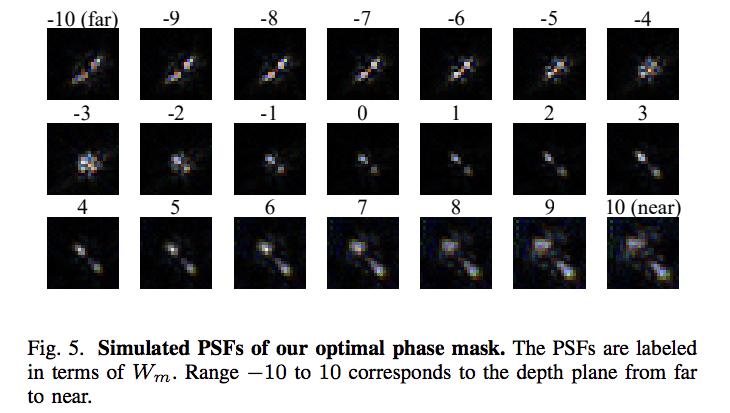

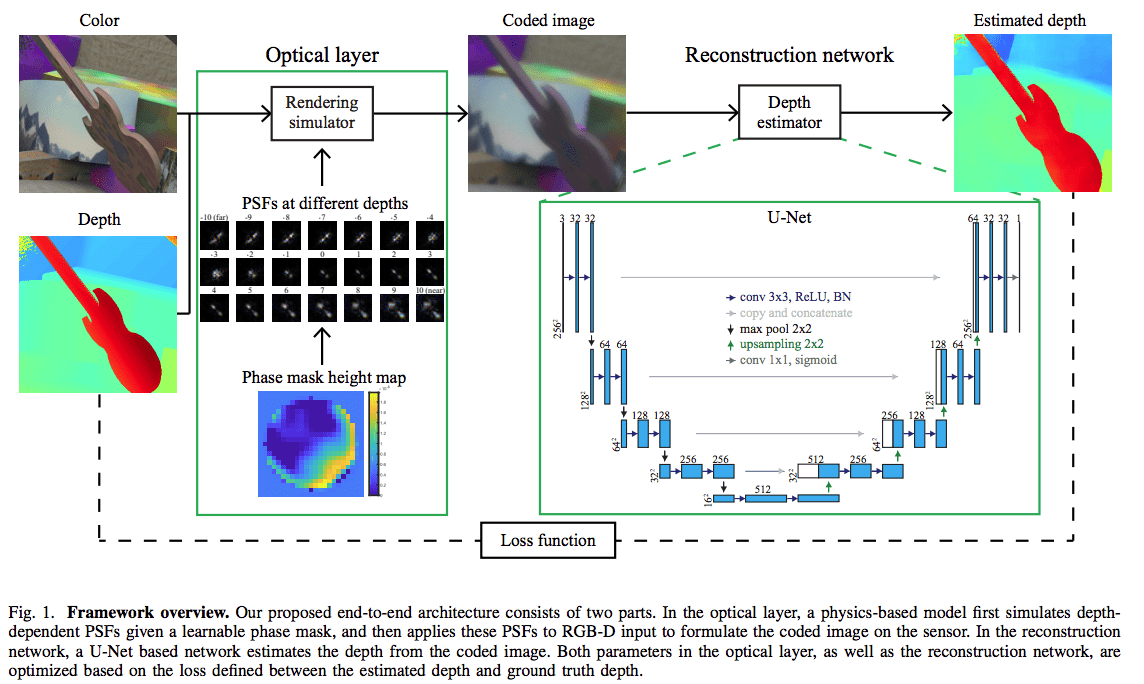

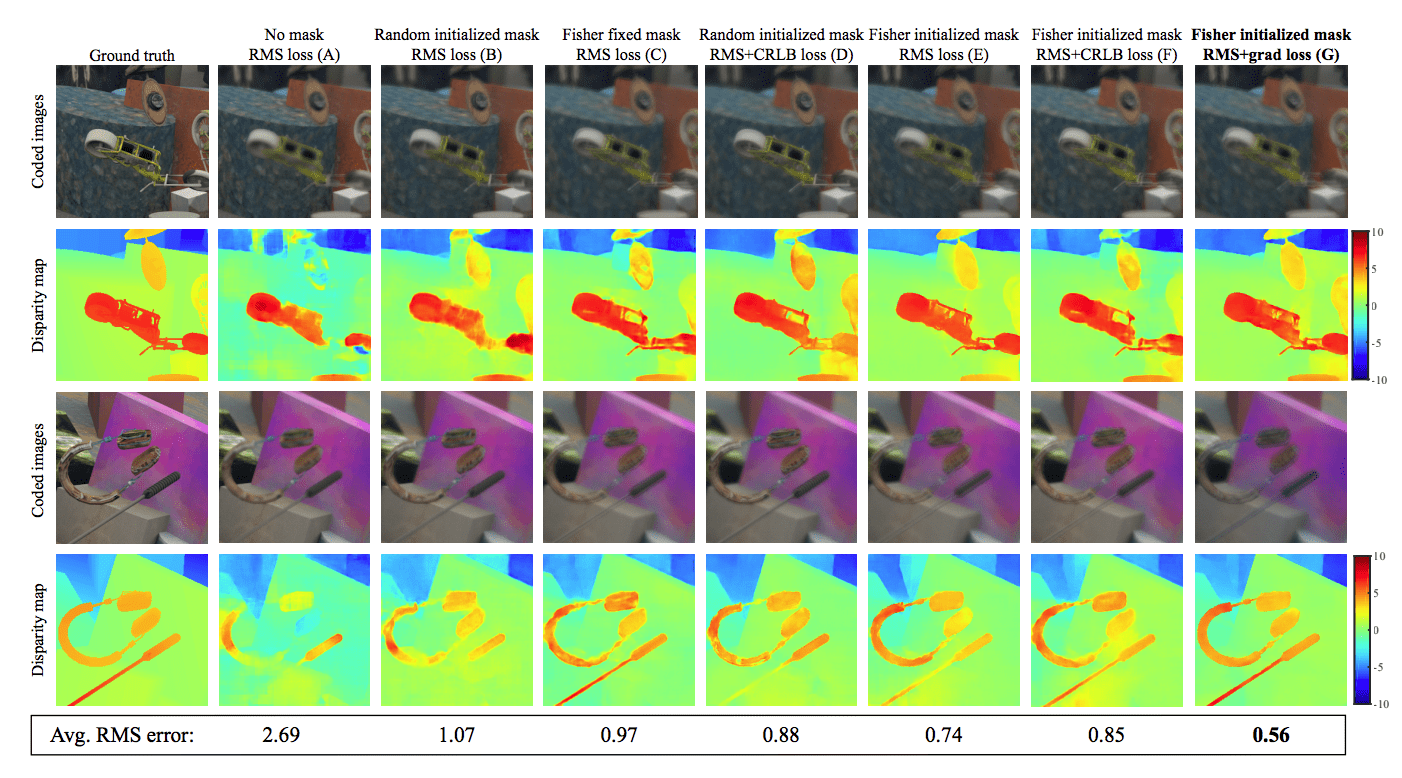

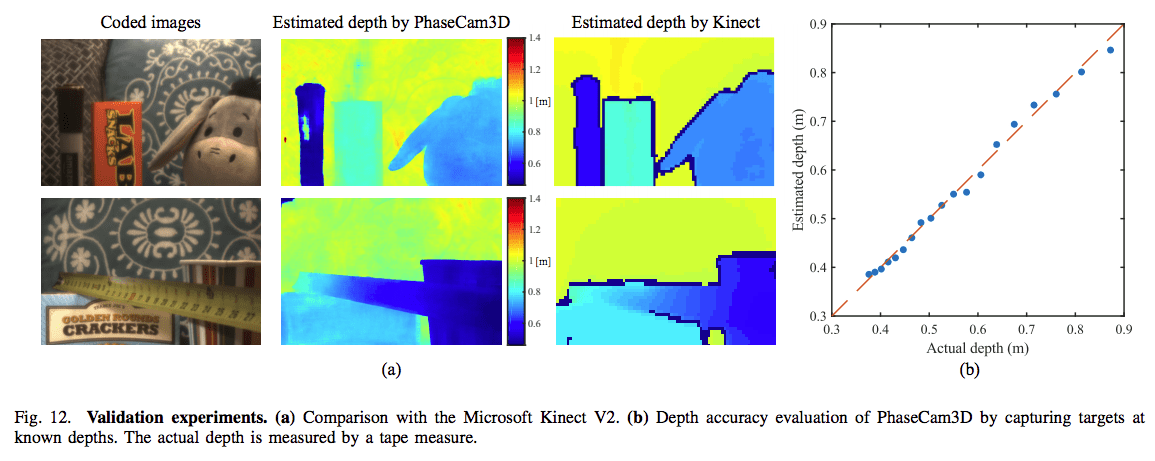

We propose PhaseCam3D, a passive, single-viewpoint 3D imaging system that jointly optimizes the front-end optics (a phase mask) and the back-end reconstruction algorithm. Using end-to-end optimization, we obtain a novel phase mask that provides superior depth estimation performance compared to existing approaches. We fabricated the optimized phase mask and built a coded-aperture camera by integrating the phase mask into the aperture plane of the lens. We demonstrate compelling 3D imaging performance using our prototype.
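The page does not state the optical model behind the phase mask. A common simplification, assumed here rather than taken from PhaseCam3D, treats the point spread function (PSF) as the squared magnitude of the Fourier transform of the pupil: the phase mask contributes a fixed phase profile, and defocus contributes a quadratic phase `psi * x**2` whose strength depends on the object's distance from the focal plane. The 1D sketch below uses only the standard library; `psf_1d`, `quartic_mask`, and all parameter values are hypothetical. It illustrates why engineering the PSF helps depth estimation: in this toy model an unmasked lens produces identical PSFs for `psi` and `-psi`, so a point in front of focus cannot be told apart from one behind it, whereas the mask makes the two PSFs differ.

```python
import cmath
import math


def psf_1d(psi, mask_phase=None, pupil_half_width=16, grid_size=64):
    """Normalised 1D intensity PSF under a toy scalar Fourier-optics model.

    psi        -- defocus strength; defocus adds the phase psi * x**2 (assumed form)
    mask_phase -- optional hypothetical function x -> phase (rad) added by a phase mask
    """
    pupil = []
    for n in range(grid_size):
        # Wrap-around coordinate so the aperture is centred on index 0 (DFT convention).
        c = n if n < grid_size // 2 else n - grid_size
        x = c / pupil_half_width  # normalised pupil coordinate, |x| <= 1 inside the aperture
        if abs(c) <= pupil_half_width:
            phase = psi * x * x
            if mask_phase is not None:
                phase += mask_phase(x)
            pupil.append(cmath.exp(1j * phase))
        else:
            pupil.append(0j)  # opaque outside the aperture

    # Naive DFT of the pupil function gives the field at the sensor plane.
    field = [
        sum(pupil[n] * cmath.exp(-2j * math.pi * k * n / grid_size) for n in range(grid_size))
        for k in range(grid_size)
    ]
    intensity = [abs(u) ** 2 for u in field]
    total = sum(intensity)
    return [v / total for v in intensity]  # normalise to unit energy


def max_abs_diff(a, b):
    return max(abs(p - q) for p, q in zip(a, b))


def quartic_mask(x):
    """Hypothetical mask profile in radians; not the PhaseCam3D design."""
    return 3.0 * x ** 4


# Without a mask, +psi and -psi give the same PSF in this model.
print(max_abs_diff(psf_1d(6.0), psf_1d(-6.0)))
# The mask breaks that symmetry, so the two PSFs now differ.
print(max_abs_diff(psf_1d(6.0, quartic_mask), psf_1d(-6.0, quartic_mask)))
```

The first printed value is at floating-point noise level and the second is clearly non-zero. An end-to-end design, as described above, would go further by treating the mask phase as learnable parameters and optimizing them jointly with the reconstruction algorithm through a differentiable version of such a forward model.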

People

Yicheng Wu

Vivek Boominathan

Aswin Sankaranarayanan

Ashok Veeraraghavan